Previewing and Recording (New for v3.3)

Once you have connected LIVE FACE and are familiar with it, you can drive your CrazyTalk Animator (CTA) character's facial expressions with a real actor's performance.

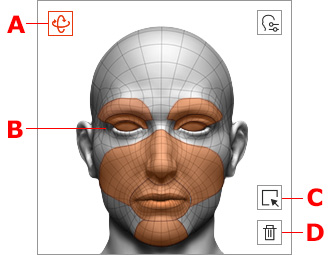

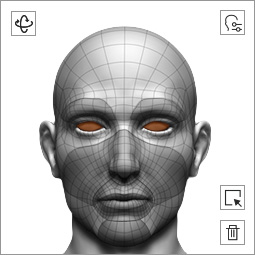

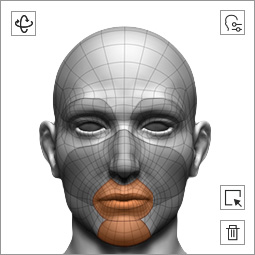

By using the Facial Mocap Controls in the mask pane, you can mask out unwanted facial features on the dummy, and extract specific facial motions.

- Head Orientation: Select it to record the head rotation.

- Facial Features Mask: Select different areas of the mask to record the movements of the corresponding facial features.

- Select All: Select all facial features of the mask, including the head orientation, so that all of their movements are recorded.

- Clear: Deselect all facial features of the mask, including the head orientation, so that none of their movements are recorded.

By default, all the facial features are selected for full-face capture.
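The effect of the mask can be pictured as a simple filter over the captured data. The following is a conceptual sketch only, not CrazyTalk Animator's API: the feature names, the `apply_mask` function, and the sample values are all hypothetical, chosen just to illustrate how Select All, a partial selection, and Clear differ.

```python
# Conceptual sketch only: this is NOT CrazyTalk Animator's API.
# The feature names are hypothetical labels for regions of the mask.
ALL_FEATURES = {"head", "brows", "eyes", "eyeballs", "nose", "cheeks", "mouth", "jaw"}

def apply_mask(captured_frame, selected):
    """Keep only the captured values whose feature is selected in the mask."""
    return {name: value for name, value in captured_frame.items() if name in selected}

# One captured frame of (made-up) motion values per feature.
frame = {"head": 0.2, "eyeballs": -0.5, "mouth": 0.8, "jaw": 0.3}

full_face = apply_mask(frame, ALL_FEATURES)  # Select All (the default)
eyes_only = apply_mask(frame, {"eyeballs"})  # only the eyeballs selected
nothing = apply_mask(frame, set())           # Clear
```

In this model, a deselected feature simply receives no data from the recording, which matches the behavior described for the mask above.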

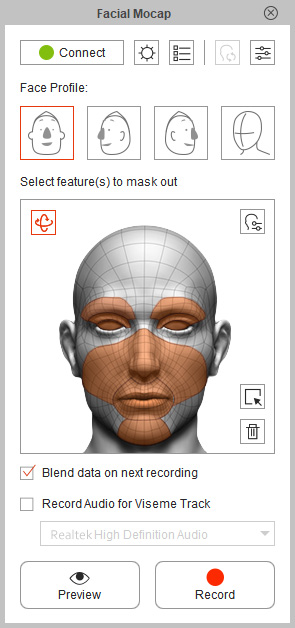

- Open the LIVE FACE app on your iPhone X. For more information about LIVE FACE, please refer to the Workflow for Facial Mocap section.

- Return to CrazyTalk Animator. Apply a character and make sure it is selected.

- Click the Facial Mocap button on the Functional Tool Bar to open the Facial Mocap panel (Animation menu >> Facial Mocap).

- Click the Preview button to preview the motion pattern (Shortcut: Space bar).

- Click the Record button to start recording.

Real human expression.

All facial features receive the movement data.

You can use the multi-layer technique to record the expressions of each facial feature separately instead of recording the entire face at once. This is especially useful when a character has a starting pose and you want to build up an expression gradually, one facial feature at a time.

- Open the LIVE FACE app on your iPhone X. For more information about LIVE FACE, please refer to the Workflow for Facial Mocap section.

- Return to CrazyTalk Animator. Apply a character and make sure it is selected.

- Click the Facial Mocap button on the Functional Tool Bar to open the Facial Mocap panel (Animation menu >> Facial Mocap).

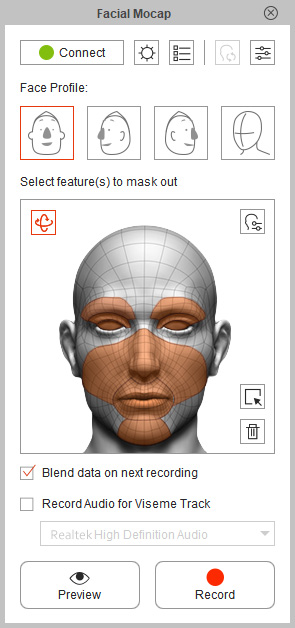

- Activate the Blend data on next recording checkbox. If it is not activated, then each recording will overwrite the last.

- Deselect all the facial features, then select one facial feature on the dummy pane (in this case, the eyeballs).

- Click the Preview button to preview the motion pattern (Shortcut: Space bar).

- Click the Record button to start recording.

Real human expression.

Only the eyeballs receive the movement data.

- Go to the start frame where you recorded the previous expression.

- Deselect all the facial features, then select one or more other facial features on the dummy pane (in this case, the mouth and jaw).

- Preview and record again.

Real human expression.

Only the mouth and jaw receive the movement data.

- Repeat the same steps above to add head movements.

Real human expression.

Only the head receives the movement data.

- Repeat these steps until all the desired expressions have been applied to the character, layer by layer. You can produce custom expressions using this method.
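The difference between recording with and without Blend data on next recording can be sketched as follows. This is an illustrative model only, not CrazyTalk Animator's implementation: the `record_pass` function, the clip layout, and the sample values are hypothetical. In particular, how CTA combines overlapping data for the same feature is not described here, so this sketch simply lets the newer pass win.

```python
# Conceptual sketch only: this is NOT CrazyTalk Animator's implementation.
# A clip is modeled as {frame_index: {feature_name: value}} (hypothetical layout).

def record_pass(clip, new_pass, blend):
    """Return the clip that results from one recording pass.

    blend=False: the new pass replaces the previous recording entirely.
    blend=True:  the new pass is layered over the existing clip, so features
                 recorded earlier are kept unless the new pass records them too.
    """
    if not blend:
        return {idx: dict(features) for idx, features in new_pass.items()}
    merged = {idx: dict(features) for idx, features in clip.items()}
    for idx, features in new_pass.items():
        merged.setdefault(idx, {}).update(features)
    return merged

# Pass 1 records only the eyeballs; pass 2 records only the mouth and jaw.
eyes = {0: {"eyeballs": 0.4}, 1: {"eyeballs": 0.1}}
mouth = {0: {"mouth": 0.7, "jaw": 0.2}, 1: {"mouth": 0.5, "jaw": 0.1}}

layered = record_pass(record_pass({}, eyes, blend=True), mouth, blend=True)
overwritten = record_pass(record_pass({}, eyes, blend=False), mouth, blend=False)
```

With blending, frame 0 of `layered` holds the eyeball, mouth, and jaw values together; without it, `overwritten` keeps only the mouth and jaw values from the last pass.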

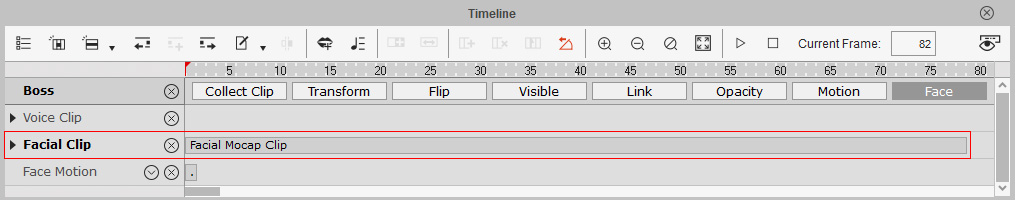

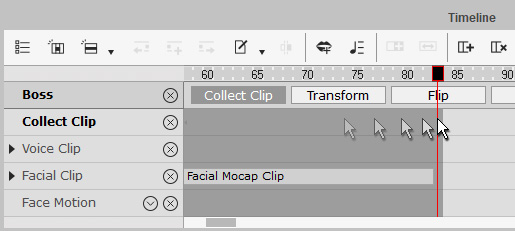

Open the Timeline panel and click the Face button. The recorded facial mocap data is stored in a Facial Mocap Clip in the Facial Clip track.

You can collect and export the facial mocap clips, and then apply them to other characters.

- Click the Collect Clip and Face buttons to show the Collect Clip and Facial Clip tracks at the same time.

-

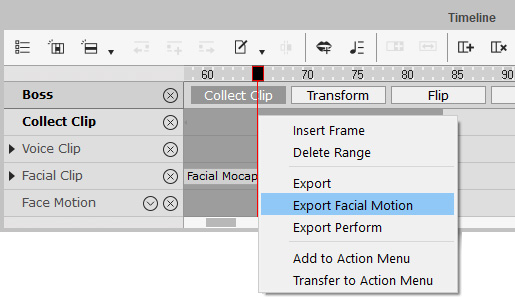

Drag to make a desired range in the Collect Clip track to collect the current facial mocap data into a clip.

-

Right-click within the range and select Export Facial Motion in the popup menu to save your facial mocap clips in *.ctFCS format.

- Select the character to which you want to apply the facial mocap data.

- Open the Timeline panel and click the Face button to show the Facial Clip track.

- Right-click on the Facial Clip track and select Import to load the exported facial mocap data.

The character will then start the face animation.

Note: If you want to further adjust the captured facial expression clips, then please refer to the Five Approaches to Generating Facial Expressions section for more information.